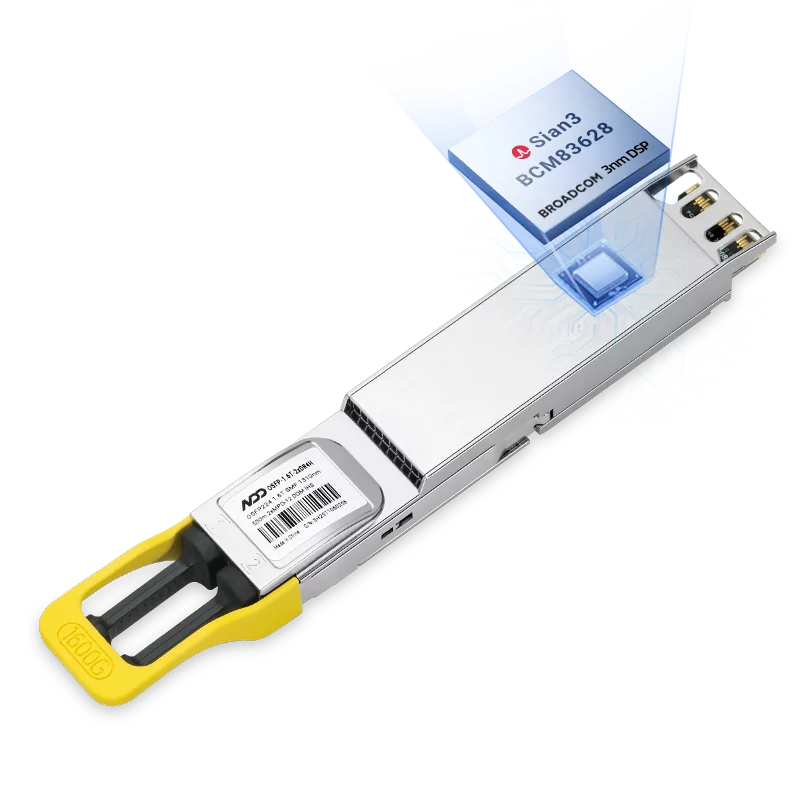

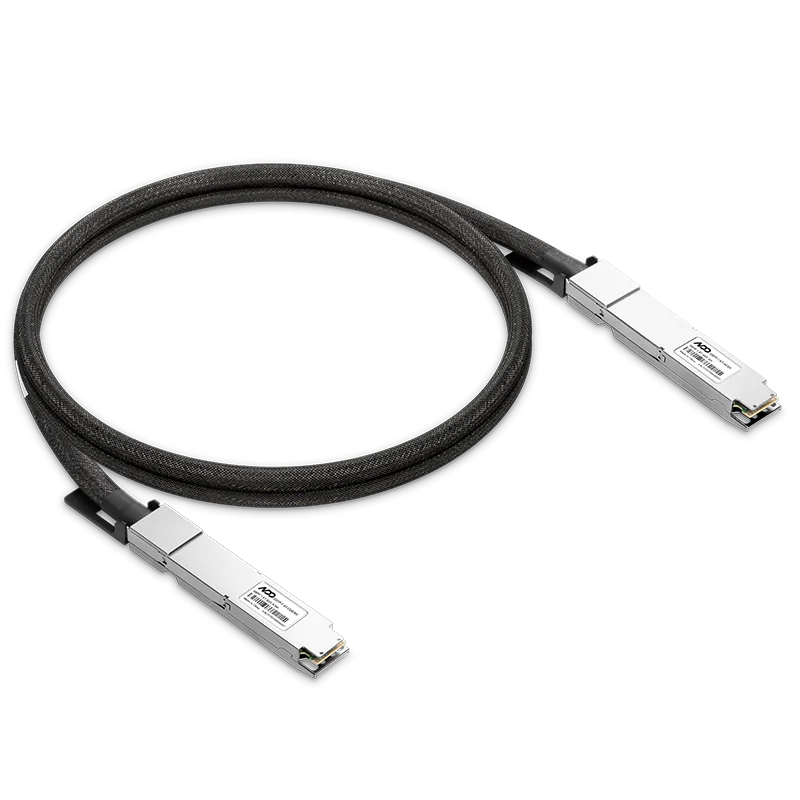

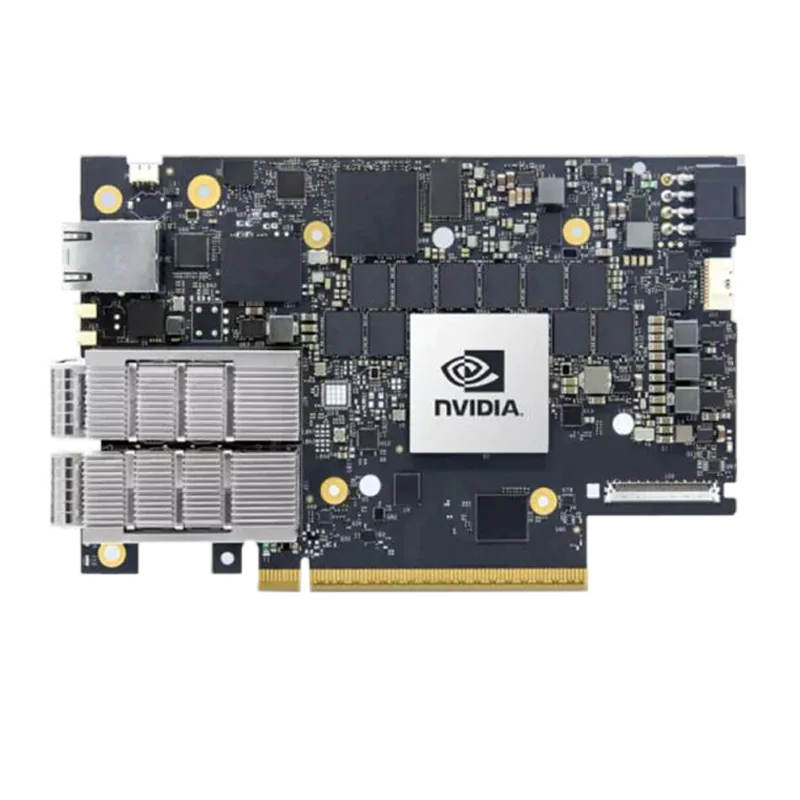

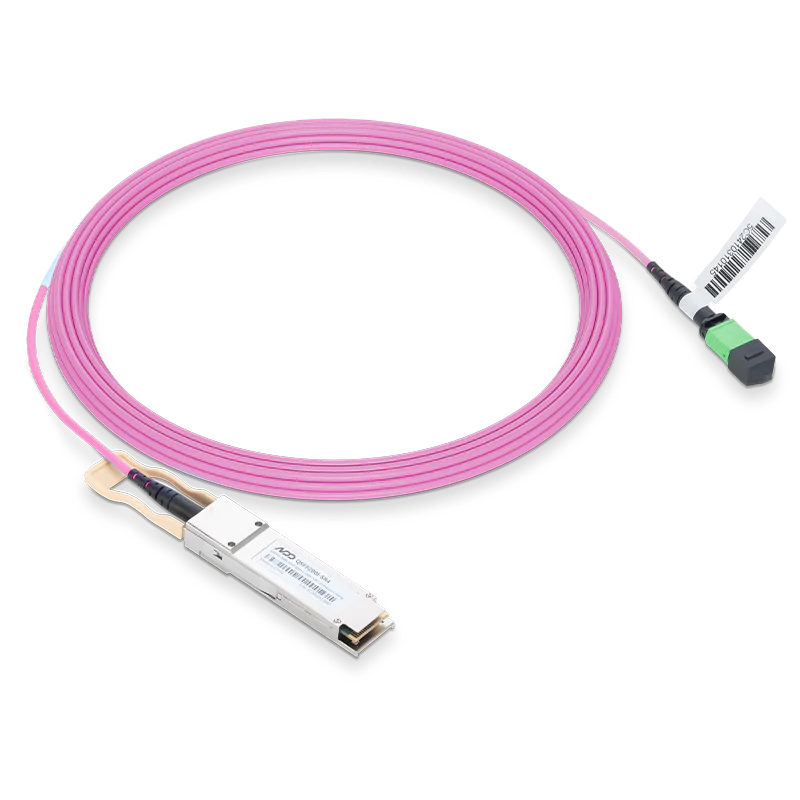

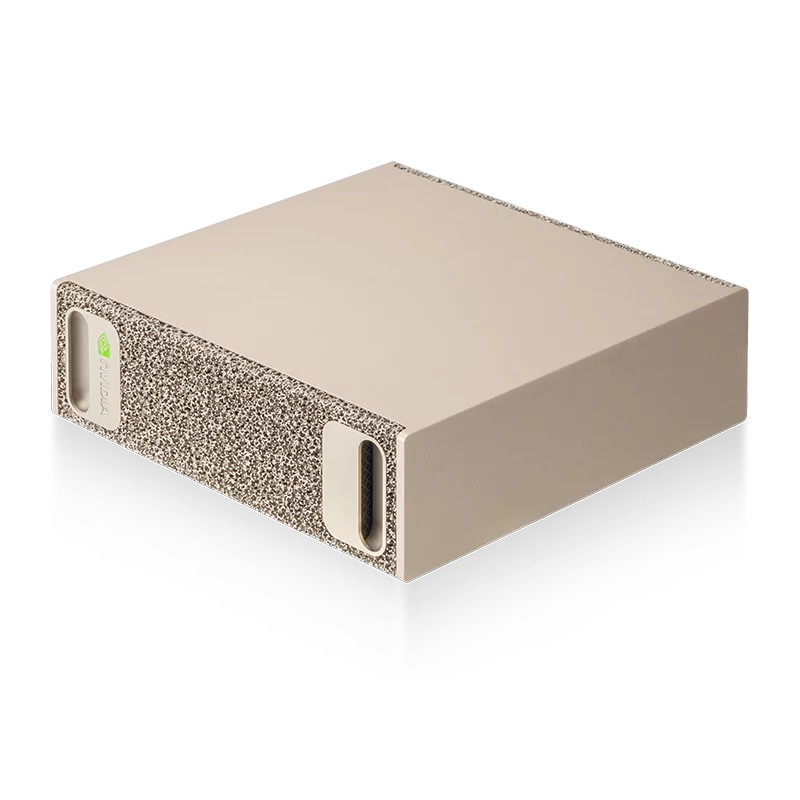

NADDOD's 200G InfiniBand HDR AOC is built to the same technology and performance standards as NVIDIA/Mellanox cables, including low power consumption, high bandwidth, high density, low latency, and low insertion loss, for full compatibility with InfiniBand systems. Its bandwidth and latency have been tested in an HDR network environment connected to NVIDIA/Mellanox equipment, and NADDOD InfiniBand products deployed in supercomputing solutions have been verified to match the performance and quality of the original.
