Find the best fit for your network needs

share:

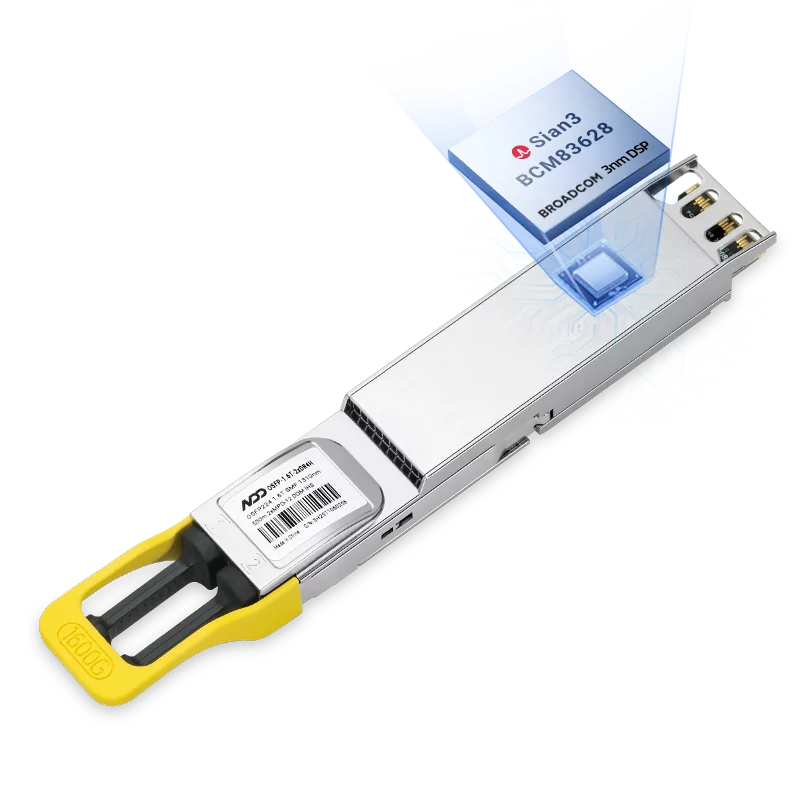

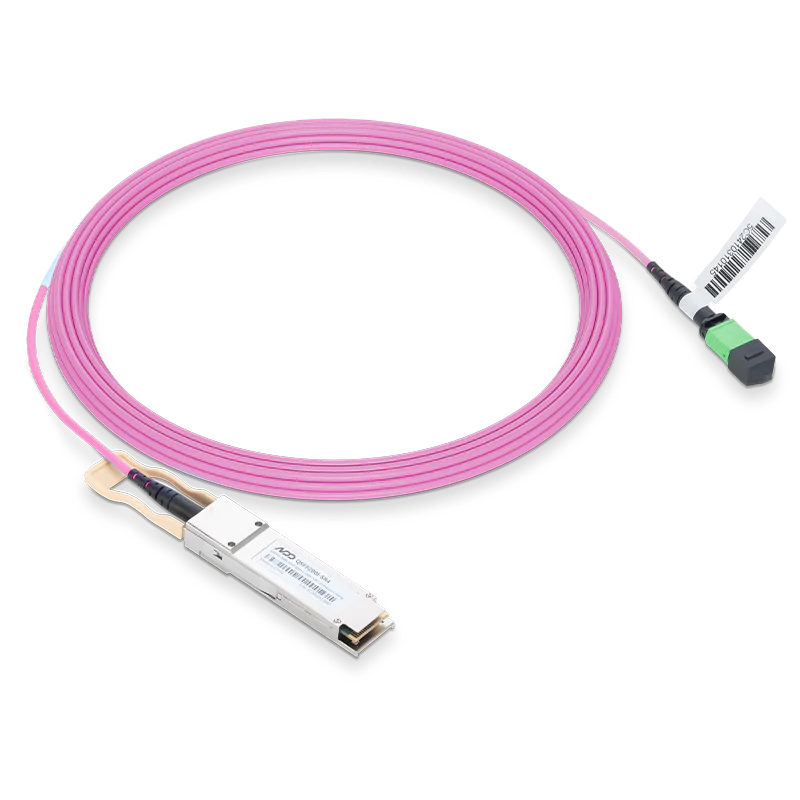

800GBASE-2xSR4 OSFP PAM4 850nm 50m MMF Module

Popular

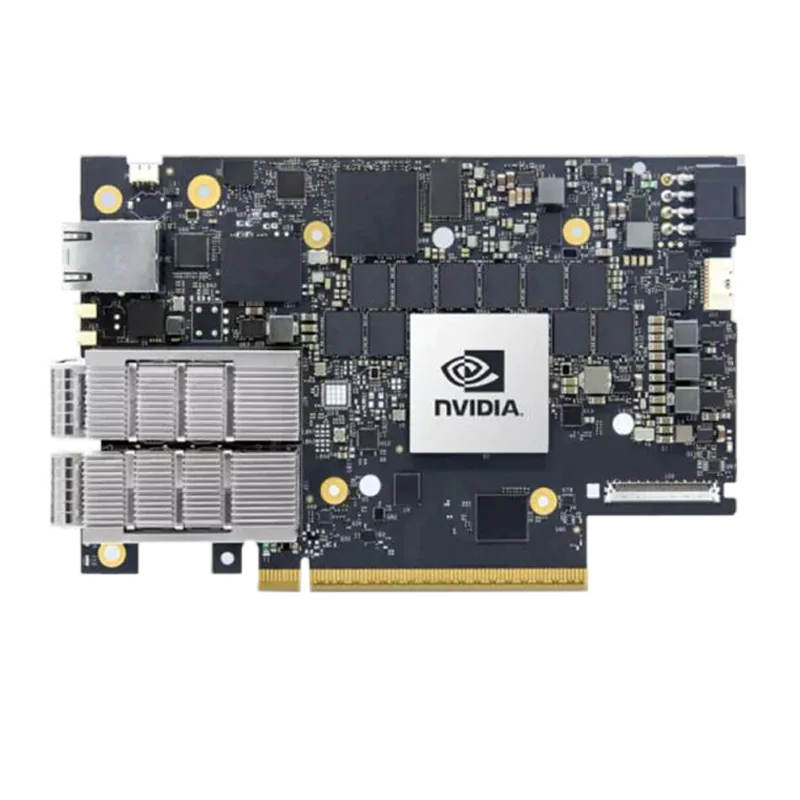

1. InfiniBand Network Technology for HPC and AI: In-Network Computing

2. Why Is InfiniBand Used in HPC?

3. Perfect Solution for 25G Bidirectional ER 40km: 25G BiDi 1289nm/1314nm

4. NADDOD Optical Products Were Deployed in GSI Data Center Upgrade Project

5. NADDOD Optical Connectivity Enabled Independent and Controllable Data Transmission for a Navy Project