Find the best fit for your network needs


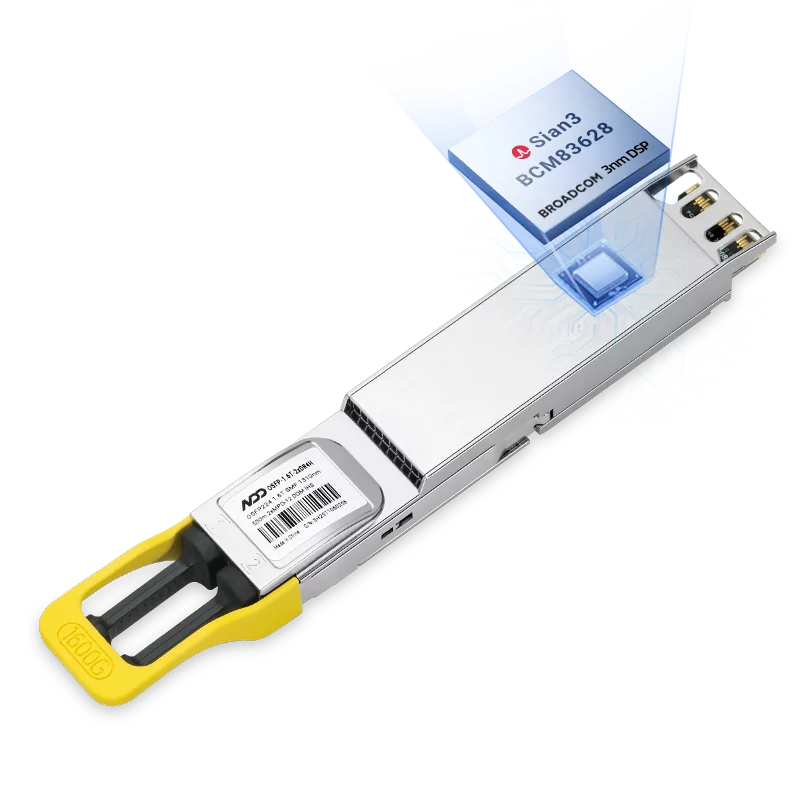

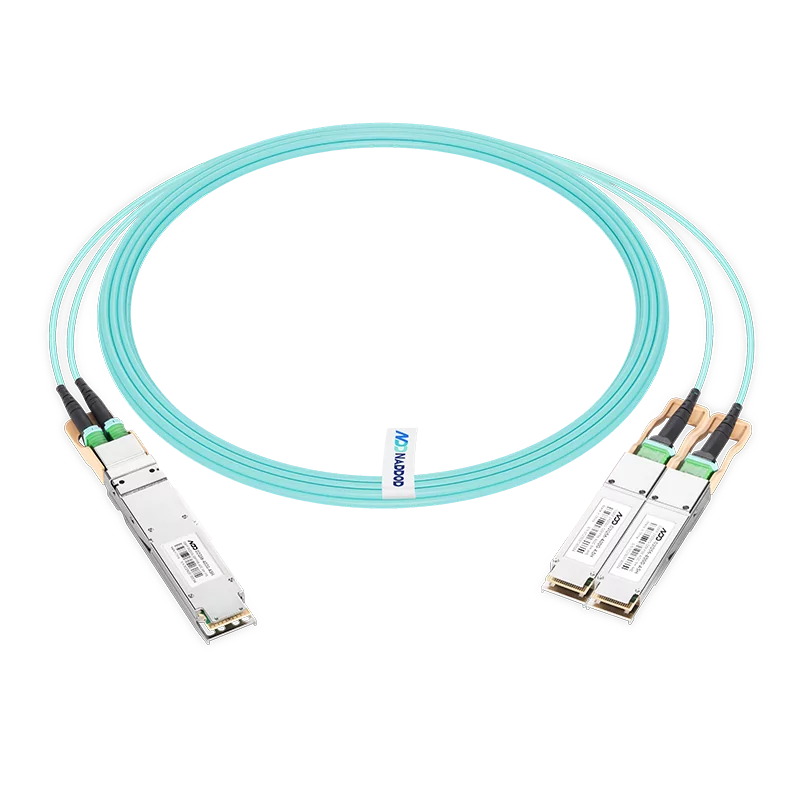

800GBASE-2xSR4 OSFP PAM4 850nm 50m MMF Module


Popular

1. Ethernet: The Road to Singularity - Modernized RDMA

2. Naddod 400G QSFP-DD SR4: Exclusive Design Empowering Data Center Interconnect

3. NADDOD 400G/800G Optical Module Boosts AI Computing Power Acceleration

4. The Key Role of High-quality Optical Transceivers in AI Networks

5. Common Problems While Using Optical Transceivers in AI Clusters