What Is the Relationship Between Switches & AI?

Neo Switch Specialist Aug 8, 2023

1. What is a Protocol?

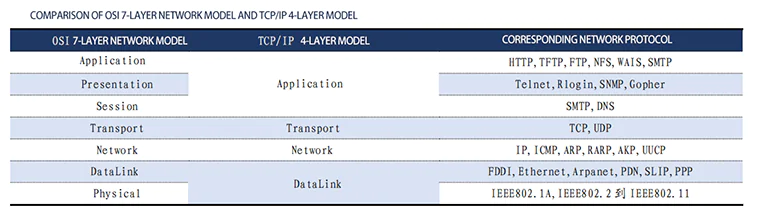

A protocol is a set of rules, standards, or agreements established for data exchange in a computer network. In formal (de jure) terms, the OSI (Open Systems Interconnection) seven-layer model is an international standard. It was proposed in the 1980s to standardize communication between computers and to meet the needs of open networks, and it divides networking into seven layers.

The physical layer addresses how hardware communicates and defines standards for physical devices, such as interface types and transmission rates, to facilitate the transmission of bit streams (data streams represented by 0s and 1s).

The data link layer primarily deals with frame encoding and error correction control. It receives data from the physical layer, encapsulates it into frames, and transmits them to the upper layer. It can also break down data from the network layer into bit streams for transmission to the physical layer. It includes error detection and correction mechanisms through checksums.
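As a rough illustration of checksum-based error detection, the sketch below appends a CRC-32 to a payload and verifies it on receipt. The function names and the 4-byte big-endian trailer are assumptions made for this example; it is not the on-wire Ethernet frame format, and it only detects errors rather than correcting them.

```python
import zlib

def make_frame(payload: bytes) -> bytes:
    """Append a 4-byte CRC-32 trailer to the payload (simplified stand-in for a frame check sequence)."""
    fcs = zlib.crc32(payload)
    return payload + fcs.to_bytes(4, "big")  # trailer byte order is an arbitrary choice here

def frame_is_intact(frame: bytes) -> bool:
    """Recompute the CRC over the payload and compare it with the received trailer."""
    payload, trailer = frame[:-4], frame[-4:]
    return zlib.crc32(payload).to_bytes(4, "big") == trailer

frame = make_frame(b"hello, link layer")
corrupted = bytes([frame[0] ^ 0x01]) + frame[1:]  # flip one bit "in transit"

print(frame_is_intact(frame))      # the untouched frame passes the check
print(frame_is_intact(corrupted))  # the damaged frame fails it
```

A receiver that sees a failed check would typically drop the frame and leave recovery to a higher layer.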

The network layer establishes logical paths between nodes and uses IP addresses to locate them (each node in the network has an IP address). It transmits data in packets.

The transport layer is responsible for supervising the quality of data transmission between two nodes in the network. It ensures data arrives in the correct order and handles issues such as loss, duplication, and congestion control.
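The ordering, duplication, and loss handling described above can be sketched with sequence numbers. This is a simplified model, not how TCP is implemented: the `reassemble` helper and the assumption that sequence numbers start at 0 and count whole segments are made up for illustration.

```python
def reassemble(segments):
    """Return payloads in sequence order (duplicates ignored) and the list of missing sequence numbers.

    `segments` is an iterable of (seq, payload) tuples; seq is assumed to start at 0.
    """
    received = {}
    for seq, payload in segments:
        received.setdefault(seq, payload)  # first copy wins, later duplicates are dropped
    if not received:
        return [], []
    highest = max(received)
    missing = [seq for seq in range(highest + 1) if seq not in received]
    in_order = [received[seq] for seq in sorted(received)]
    return in_order, missing

# Segments arrive out of order, with one duplicate (seq 2) and one loss (seq 1).
arrived = [(2, b"C"), (0, b"A"), (2, b"C"), (3, b"D")]
data, lost = reassemble(arrived)
print(data, lost)
```

A real transport protocol would request retransmission of the missing segment before delivering anything after the gap.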

The session layer manages session connections in network devices. Its main function is to provide session control and synchronization, allowing coordination of communication between different devices.

The presentation layer is responsible for data format conversion and encryption/decryption operations. It ensures that data can be correctly interpreted and processed by applications on different devices.

The application layer provides direct network services and application interfaces to users. It includes various applications such as email, file transfer, and remote login.

These layers together form the OSI seven-layer model, with each layer having specific functions and responsibilities to facilitate communication and data exchange between computers.

It's important to note that real-world network protocols may not strictly adhere to the OSI model and may be designed and implemented based on practical requirements and network architectures.

TCP/IP is a protocol suite that includes various protocols and can be roughly divided into four layers: the application layer, transport layer, network layer, and data link layer. TCP/IP can be seen as a simplified, practical counterpart to the OSI seven-layer model.
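One common way to relate the two models is shown in the sketch below. The grouping is an assumption for illustration: folding the physical layer into the data link layer follows the four-layer view used in this article, while other references name that layer "link" or "network access".

```python
# Map each OSI layer to the TCP/IP layer it is usually grouped under.
OSI_TO_TCPIP = {
    "application": "application",
    "presentation": "application",
    "session": "application",
    "transport": "transport",
    "network": "network",
    "data link": "data link",
    "physical": "data link",  # assumption: physical is folded into the lowest TCP/IP layer
}

def tcpip_layer(osi_layer: str) -> str:
    """Look up the TCP/IP layer for a given OSI layer name (case-insensitive)."""
    return OSI_TO_TCPIP[osi_layer.lower()]

tcpip_layers = sorted(set(OSI_TO_TCPIP.values()))
print(tcpip_layer("Session"))
print(tcpip_layers)
```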

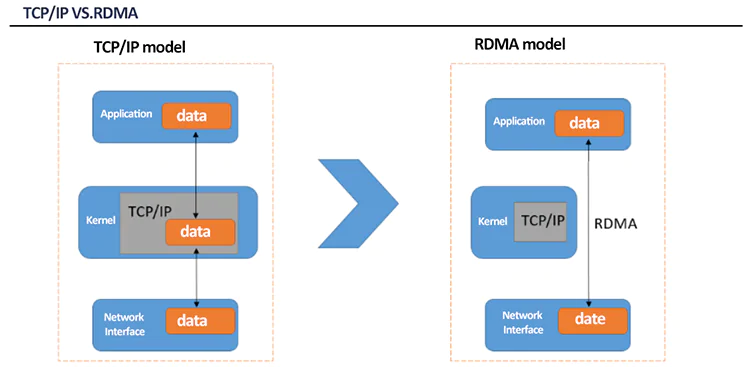

High-Performance Computing (HPC) requires high throughput and low latency, so HPC networks have gradually shifted from TCP/IP to RDMA (Remote Direct Memory Access). TCP/IP has two main drawbacks:

- High latency: TCP/IP introduces latency of several tens of microseconds. The protocol stack needs multiple context switches during transmission and relies on the CPU for encapsulation, which makes its latency relatively long.

- High CPU overhead: TCP/IP networks require the host CPU to take part in multiple memory copies within the protocol stack, so the CPU load is high and rises with network bandwidth.

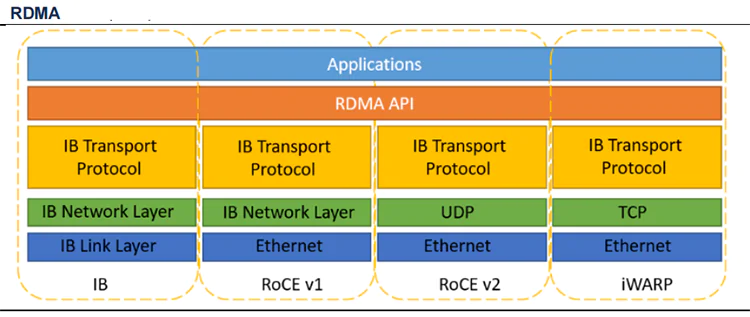

RDMA (Remote Direct Memory Access) is a technology that allows one host to access the memory of another directly through the network interface, without involving the operating system kernel. It enables high-throughput, low-latency network communication, making it particularly suitable for large-scale parallel computing clusters.

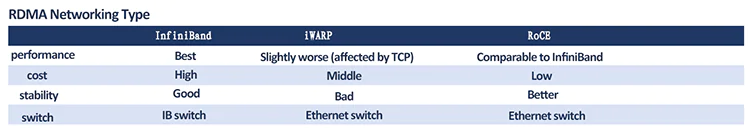

RDMA does not specify the entire protocol stack but imposes high requirements on specific transports, such as minimal packet loss, high throughput, and low latency. There are different branches within RDMA. InfiniBand, for example, is designed specifically for RDMA and ensures reliable transmission at the hardware level. It is technologically advanced but comes with high costs. On the other hand, RoCE (RDMA over Converged Ethernet) and iWARP (Internet Wide Area RDMA Protocol) are both RDMA technologies based on Ethernet.

2. What is the Role of Switches in Data Center Architecture?

Switches and routers operate at different layers of the network. A switch works at the data link layer, using MAC addresses to identify devices and forward frames between them. A router, often acting as a gateway, operates at the network layer and uses IP addressing to connect different subnetworks.
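The way a layer-2 switch uses MAC addresses can be sketched as a small learning table. The `LearningSwitch` class below is a hypothetical, simplified model (no VLANs, no entry aging, no broadcast handling), not any vendor's implementation.

```python
class LearningSwitch:
    """Toy layer-2 switch: learns which port each source MAC lives on."""

    def __init__(self, num_ports: int):
        self.num_ports = num_ports
        self.mac_table = {}  # MAC address -> port number

    def receive(self, in_port: int, src_mac: str, dst_mac: str) -> list:
        """Return the list of ports the frame is sent out of."""
        self.mac_table[src_mac] = in_port  # learn (or refresh) the sender's location
        out_port = self.mac_table.get(dst_mac)
        if out_port is None:
            # Unknown destination: flood to every port except the one it came in on.
            return [p for p in range(self.num_ports) if p != in_port]
        if out_port == in_port:
            return []  # destination is on the same port, nothing to forward
        return [out_port]

sw = LearningSwitch(num_ports=4)
first = sw.receive(0, "aa:aa:aa:aa:aa:01", "bb:bb:bb:bb:bb:02")   # destination unknown, flooded
second = sw.receive(1, "bb:bb:bb:bb:bb:02", "aa:aa:aa:aa:aa:01")  # reply, sender already learned
third = sw.receive(0, "aa:aa:aa:aa:aa:01", "bb:bb:bb:bb:bb:02")   # both ends now known
print(first, second, third)
```

After the first exchange the switch no longer floods, which is what keeps traffic between two hosts off the other ports.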

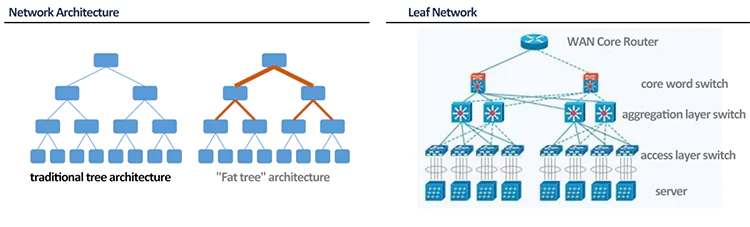

Traditional data centers often employ a three-tier architecture, consisting of the access layer, aggregation layer, and core layer. The access layer is typically directly connected to servers, with the commonly used access switches being Top of Rack (TOR) switches. The aggregation layer serves as an intermediary between the access layer and the core layer. The core switch provides forwarding for traffic entering and leaving the data center and connectivity to the aggregation layer.

Traditional three-tier network architectures have significant drawbacks, which become more pronounced with the development of cloud computing:

- Bandwidth waste: Each group of aggregation switches manages a Point of Delivery (POD), and each POD has independent VLAN networks. Spanning Tree Protocol (STP) is often used between aggregation switches and access switches. STP allows only one aggregation switch to be active for a VLAN network, while others are blocked. This prevents horizontal scalability of the aggregation layer.

- Large failure domain: Due to the algorithm used by STP, network topology changes require convergence, which can lead to network disruptions.

- High latency: As data centers grow, the increase in east-west traffic results in significant latency, because communication between servers in a three-tier architecture needs to traverse multiple switches. The core switch and aggregation switches face increasing workloads, and upgrading their performance leads to increased costs.

Leaf-spine architecture offers significant advantages, including a flattened design, low latency, and high bandwidth. In a leaf-spine network, leaf switches serve a similar purpose to traditional access switches, while spine switches act as the core switches.

Leaf and spine switches dynamically select among multiple paths using Equal Cost Multi-Path (ECMP). When there are no bottlenecks in the access ports and uplinks of the leaf layer, this architecture achieves non-blocking performance. Since each leaf in the fabric connects to every spine, if one spine encounters an issue, the data center's throughput only degrades slightly.
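A minimal sketch of hash-based ECMP path selection follows. It assumes a flow is identified by a 5-tuple and that all spines offer equal capacity; real switches use their own hardware hash functions, so CRC-32, the function names, and the addresses here are stand-ins for illustration.

```python
import zlib

def ecmp_pick_spine(flow, spines):
    """Hash the flow 5-tuple and pick one spine; packets of the same flow keep the same path."""
    key = "|".join(str(field) for field in flow).encode()
    return spines[zlib.crc32(key) % len(spines)]

def remaining_capacity(total_spines: int, failed_spines: int) -> float:
    """Fraction of leaf-to-spine capacity left when some equal-capacity spines fail."""
    return (total_spines - failed_spines) / total_spines

spines = ["spine1", "spine2", "spine3", "spine4"]
# (source IP, destination IP, IP protocol number, source port, destination port)
flow = ("10.0.1.5", "10.0.2.9", 6, 49152, 443)

chosen = ecmp_pick_spine(flow, spines)
print(chosen)
print(remaining_capacity(total_spines=4, failed_spines=1))
```

With four spines, losing one leaves three quarters of the uplink capacity, and the more spines a fabric has, the smaller the loss from any single failure.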

3. Is NVIDIA Switch the Same as an IB Switch?

The answer is no: they are not the same.

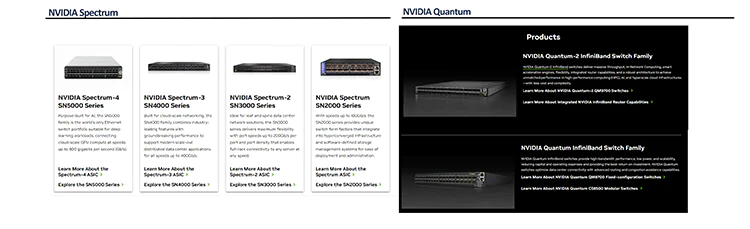

The NVIDIA Spectrum and Quantum platforms cover both Ethernet and InfiniBand (IB) switches. The IB switches come primarily from Mellanox, a company NVIDIA acquired in 2020. The Spectrum platform consists of Ethernet switches and continues to be iterated. The Spectrum-4, released in 2022, is a 400G Ethernet switch product.

Spectrum-X is designed specifically for generative AI and aims to overcome the limitations of traditional Ethernet switches. The key elements of the NVIDIA Spectrum-X platform are the NVIDIA Spectrum-4 Ethernet switch and the NVIDIA BlueField-3 DPU.

The main advantages of Spectrum-X include:

- Extending RoCE for AI and Adaptive Routing (AR) to achieve maximum performance of the NVIDIA Collective Communications Library (NCCL). NVIDIA Spectrum-X can achieve up to 95% effective bandwidth for workloads on ultra-large-scale systems.

- Utilizing performance isolation to ensure that one job does not impact another in multi-tenant and multi-job environments.

- Ensuring that the network architecture continues to provide the highest performance even in the event of network component failures.

- Synchronizing with the BlueField-3 DPU to achieve optimal NCCL and AI performance.

- Maintaining consistent and stable performance across various AI workloads, which is crucial for meeting SLA requirements.

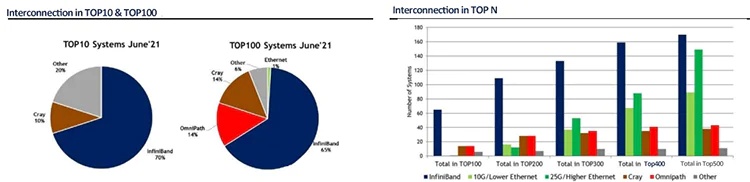

The choice between IB and Ethernet in network architecture is an important consideration. Currently, Ethernet holds the majority market share, but in large-scale computing scenarios, IB stands out. In the TOP500 list presented at the ISC 2021 supercomputing conference, IB accounted for 70% of the top 10 systems and 65% of the top 100. As the scope of consideration expands to more of the list, the share of IB decreases.

The Spectrum and Quantum platforms cater to different application scenarios. In NVIDIA's vision, AI application scenarios can be broadly categorized as AI Cloud and AI Factory. In AI Cloud, traditional Ethernet switches and Spectrum-X Ethernet can be used, while in AI Factory, an NVLink+InfiniBand solution is required.

4. How Should NVIDIA SuperPOD be Understood?

SuperPOD is a server cluster that provides high throughput performance by interconnecting multiple compute nodes.

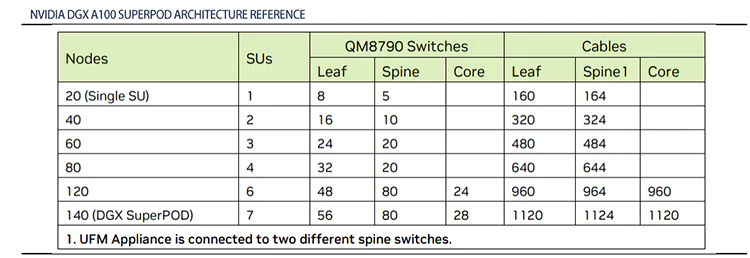

Taking the NVIDIA DGX A100 SuperPOD as an example, the recommended configuration includes the QM8790 switch, which provides 40 ports of 200G each. The architecture used is a non-blocking fat tree. In the first layer, each DGX A100 server has 8 interfaces, each connecting to one of 8 leaf switches. Every 20 servers form a scalable unit (SU), so the number of leaf switches required is 8 × the number of SUs. In the second layer, since the network is non-blocking and the port speeds are consistent, the number of ports provided by the spine switches should be greater than or equal to the number of uplink ports on the leaf switches. Therefore, 1 SU corresponds to 8 leaf switches and 5 spine switches, 2 SUs correspond to 16 leaf switches and 10 spine switches, and so on. Additionally, when the number of SUs exceeds 6, it is recommended to add a core layer of switches.
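The leaf-layer arithmetic can be checked with a short sketch. The constants come from the figures quoted above; the `leaf_layer` function and the assumption that every leaf port not used as a downlink is available as an uplink are illustrative only. Spine and core counts are not derived here, since they follow NVIDIA's recommended configuration rather than a simple formula.

```python
SERVERS_PER_SU = 20        # DGX A100 servers per scalable unit, from the text
INTERFACES_PER_SERVER = 8  # compute interfaces per DGX A100, from the text
LEAVES_PER_SU = 8          # one leaf switch per server interface, from the text
SWITCH_PORTS = 40          # QM8790: 40 ports of 200G, from the text

def leaf_layer(num_sus: int) -> dict:
    """Count servers and leaf switches, and check the leaf port split for a given number of SUs."""
    servers = num_sus * SERVERS_PER_SU
    leaves = num_sus * LEAVES_PER_SU
    downlinks_per_leaf = servers * INTERFACES_PER_SERVER // leaves
    uplinks_per_leaf = SWITCH_PORTS - downlinks_per_leaf  # assumption: all remaining ports are uplinks
    return {
        "servers": servers,
        "leaf_switches": leaves,
        "downlinks_per_leaf": downlinks_per_leaf,
        "uplinks_per_leaf": uplinks_per_leaf,
        "non_blocking": uplinks_per_leaf >= downlinks_per_leaf,
    }

for sus in (1, 2):
    print(sus, leaf_layer(sus))
```

Under these assumptions each leaf uses 20 ports toward servers and 20 toward the spines, which exactly fills a 40-port switch.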

In the DGX A100 SuperPOD, the server-to-switch ratio for the compute network is approximately 1:1.17 (based on 7 SUs). However, when considering the requirements for storage and network management, the server-to-switch ratio for DGX A100 SuperPOD and DGX H100 SuperPOD is approximately 1:1.34 and 1:0.50, respectively.

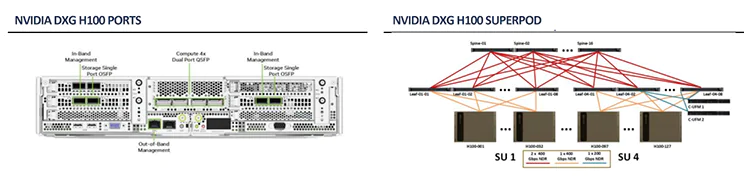

In terms of ports, the recommended configuration for DGX H100 includes 31 servers per SU. Each DGX H100 has only 4 interfaces for compute purposes, and the switch used in the DGX H100 SuperPOD is the QM9700, which provides 64 ports of 400G each.

In terms of switch performance, the QM9700 in the DGX H100 SuperPOD's recommended configuration offers significant improvements. The InfiniBand switch supports SHARP (Scalable Hierarchical Aggregation and Reduction Protocol) technology, which uses the Aggregation Manager to construct Streaming Aggregation Trees (SATs) in the physical topology. Multiple switches in the tree perform computations in parallel, resulting in reduced latency and improved network performance. The QM8700/8790+CX6 supports a maximum of 2 SATs, while the QM9700/9790+CX7 supports up to 64 SATs. With an increased number of ports per switch, the switch count is also reduced.
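The idea of aggregating data inside the switch tree can be illustrated conceptually. The sketch below is not the SHARP API and does not model parallel execution: it only shows how partial results combine on their way up a tree, with nested lists standing in for switches and numbers standing in for each host's contribution.

```python
def aggregate(node):
    """Sum the values under `node`.

    A number is a host's contribution; a list is a switch that combines
    the results of its children before passing one value upward.
    """
    if isinstance(node, (int, float)):
        return node
    return sum(aggregate(child) for child in node)

# Root switch -> two leaf switches -> two hosts each (a made-up topology).
tree = [[1, 2], [3, 4]]
total = aggregate(tree)
print(total)
```

Each switch forwards a single combined value instead of every host's data, which is why in-network reduction lowers both traffic and latency for collective operations.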

5. Switch Selection: Ethernet vs. InfiniBand

The essential difference between Ethernet switches and InfiniBand switches lies in the difference between the TCP/IP protocol and RDMA (Remote Direct Memory Access). Currently, Ethernet switches are more commonly used in traditional data centers, while InfiniBand switches are more prevalent in storage networks and HPC (High-Performance Computing) environments. Both Ethernet and InfiniBand switches can achieve a maximum bandwidth of 400G.

RoCE vs InfiniBand vs TCP/IP

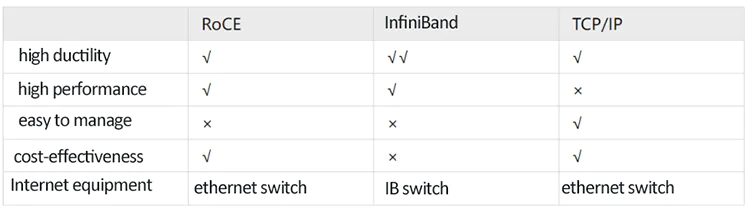

- High Scalability: All three network protocols are scalable and flexible, with InfiniBand being the most scalable. A single InfiniBand subnet can support tens of thousands of nodes. Its relatively simple architecture also allows virtually unlimited cluster sizes by connecting subnets through InfiniBand routers.

- High Performance: Because of the additional CPU processing overhead and latency introduced by TCP/IP, it performs worse than the other two network protocols. RoCE improves speed and power efficiency in enterprise data centers while reducing the total cost of ownership, without requiring a replacement of the Ethernet infrastructure. InfiniBand, on the other hand, achieves faster and more efficient communication by transmitting data serially, one bit at a time, over a switched fabric.

- Ease of Management: While RoCE and InfiniBand offer lower latency and higher performance than TCP/IP, the latter is easier to deploy and manage. Network administrators using TCP/IP for device and network connectivity require minimal centralized management.

- Cost-Effectiveness: InfiniBand may not be a favorable choice for budget-constrained enterprise data centers. It relies on expensive IB switch ports to carry a significant load of applications, which adds to the enterprise's computational, maintenance, and management costs. In contrast, RoCE and TCP/IP, which use Ethernet switches, offer a more cost-effective solution.

- Network Equipment: As shown in the table above, RoCE and TCP/IP use Ethernet switches for data transmission, while InfiniBand employs dedicated IB switches to carry applications. In typical scenarios, IB switches must be interconnected with devices that support the IB protocol, so the ecosystem is relatively closed and the equipment is difficult to replace.

Data centers today require maximum bandwidth and extremely low latency from their underlying interconnect. In such cases, traditional TCP/IP network protocols fall short of meeting the data center's demands as they introduce CPU processing overhead and high latency.

Enterprises deciding between RoCE and InfiniBand should weigh their own requirements and cost considerations. If they prioritize the highest-performance network connectivity, InfiniBand is the better choice. Those seeking a balance of performance, ease of management, and cost-effectiveness should opt for RoCE in their data centers.

6. NADDOD InfiniBand & RoCE Solutions

No matter whether you choose RoCE or InfiniBand, NADDOD offers lossless network solutions based on these two network connection options, enabling users to build high-performance computing capabilities and lossless network environments. NADDOD can tailor the optimal solution based on specific application scenarios and user requirements, providing users with high bandwidth, low latency, and high-performance data transmission. This effectively addresses network bottlenecks, enhances network performance, and improves user experience.

NADDOD provides high-speed Ethernet and InfiniBand products, including InfiniBand HDR/NDR and RoCE 200G/400G AOCs, DACs, optical modules, and other products. These products offer excellent performance and cost-effectiveness, significantly enhancing customers' business acceleration capabilities. With a customer-centric approach, NADDOD consistently creates outstanding value for customers across various industries. Its high-quality products and solutions have earned customer trust and recognition and are widely applied in high-performance computing, data centers, education and research, biomedical, finance, energy, autonomous driving, internet, manufacturing, telecommunications, and other industries and critical domains. NADDOD works closely with customers, providing them with reliable and efficient network technology to help them succeed in the digital age. Whether you choose InfiniBand or RoCE, NADDOD will be your trusted partner.