Find the best fit for your network needs

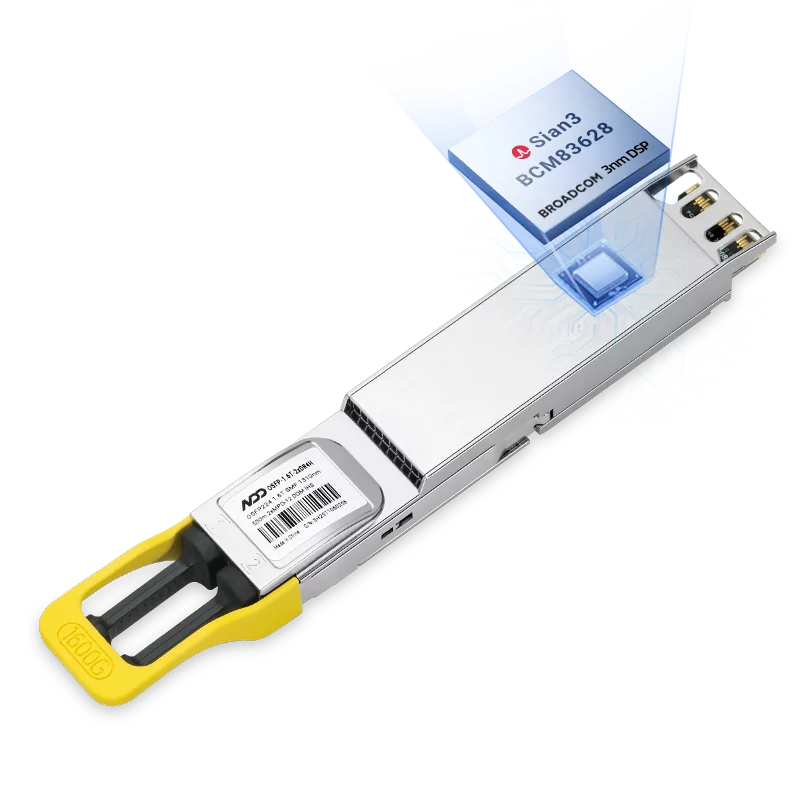

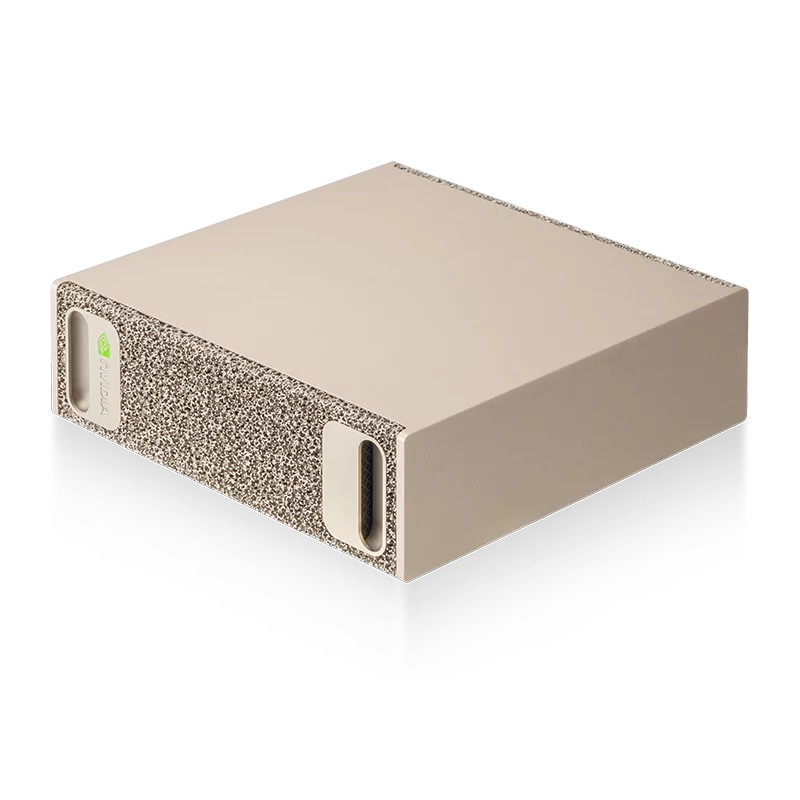

800GBASE-2xSR4 OSFP PAM4 850nm 50m MMF Module

Popular

1. What are AI Inference Workloads? Why AI Inference Workloads Are Growing Rapidly

2. Optimizing AI Inference Workloads: Reducing Latency, Boosting Throughput, and Cutting Costs

3. Analysis of Prefix Caching in Large Language Model Inference

4. InfiniBand vs RoCE for AI Inference Workloads: Performance, Cost, and Scalability

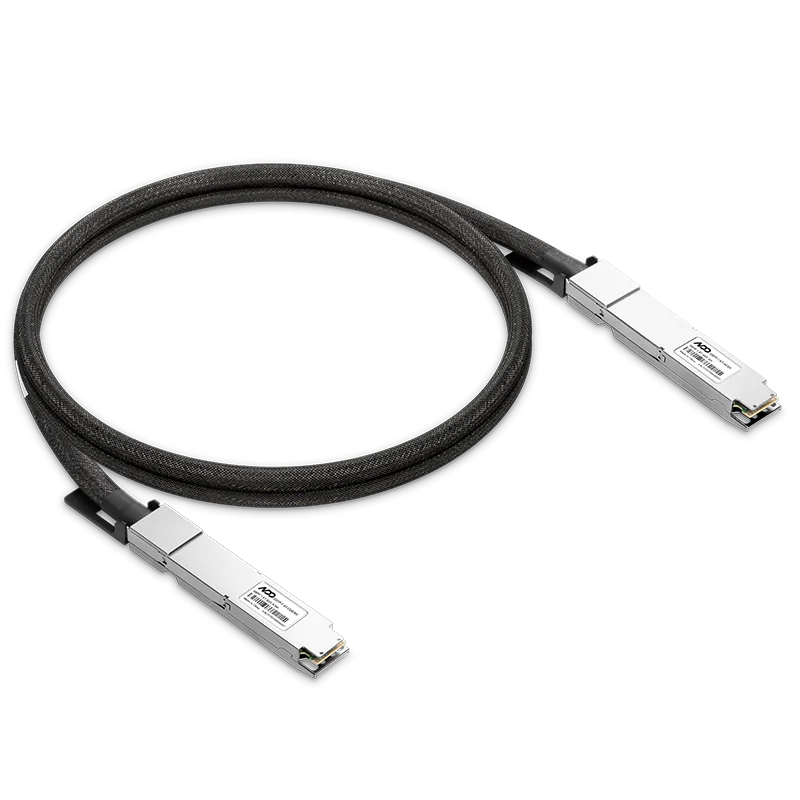

5. DAC vs AOC vs Optical Transceiver: Which Interconnect Should You Choose For AI Inference Workloads