AI Networking

Training vs Inference: Why Your AI Network Architecture Needs to Be Different

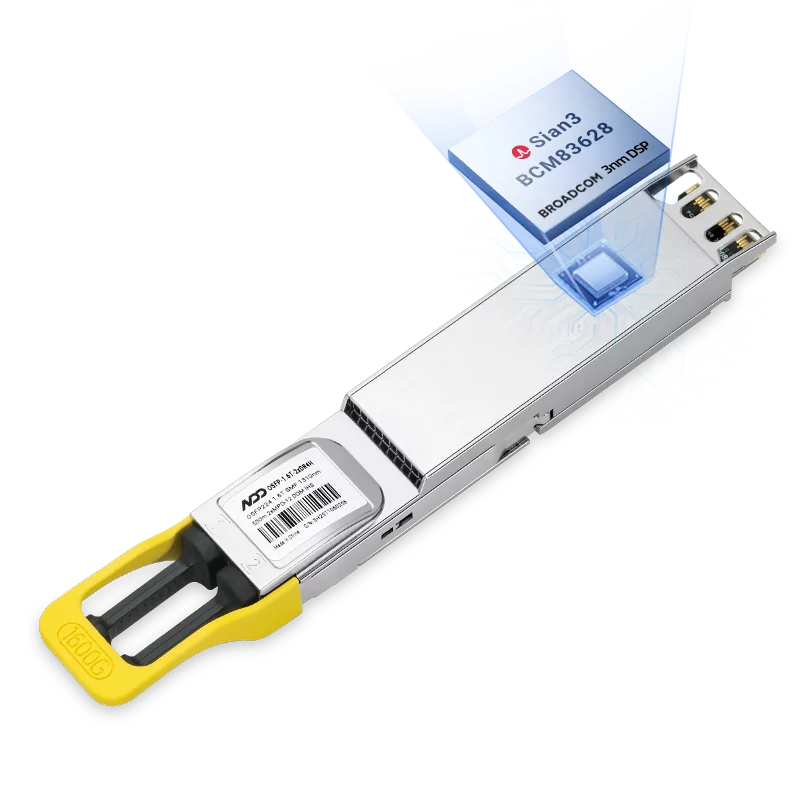

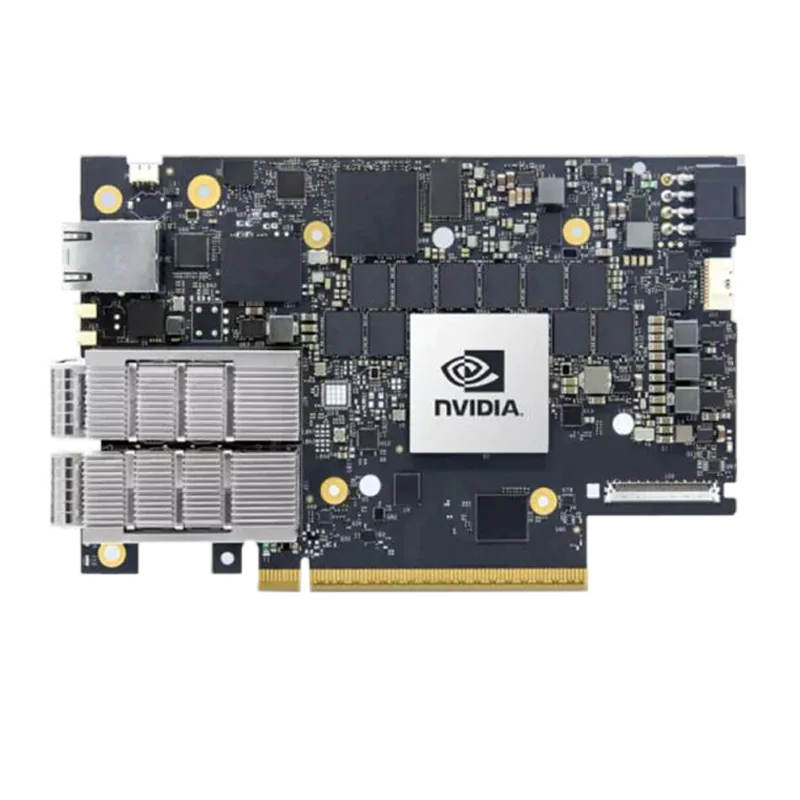

AI training and inference have fundamentally different network requirements. Learn how the shift from training to inference workloads is driving the rise of RoCE—and how NADDOD's RoCEv2 solutions deliver the performance, cost efficiency, and scalability your AI infrastructure needs.

Jason, Apr 3, 2026
