
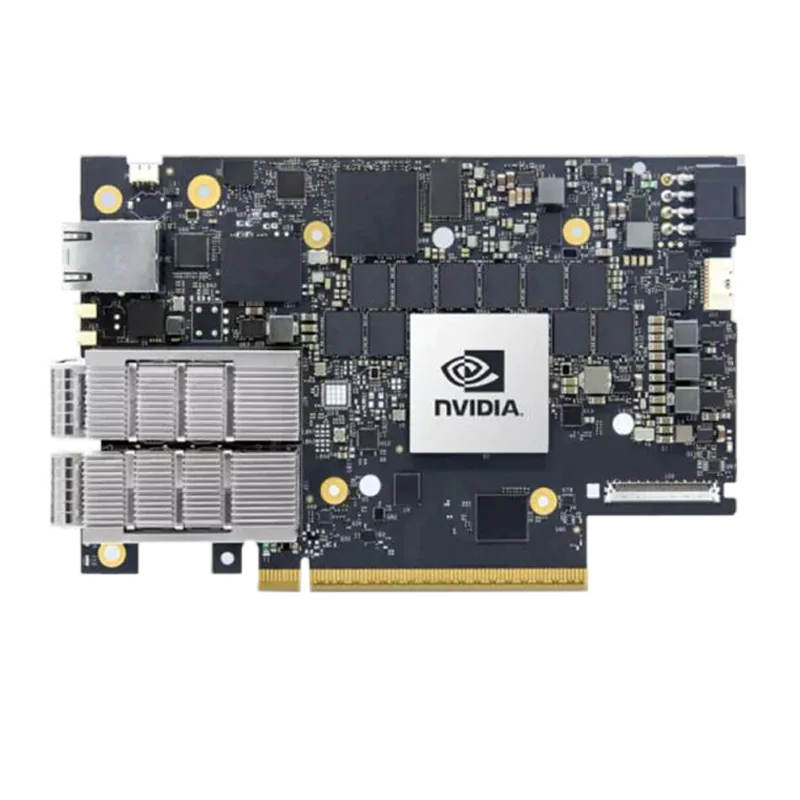

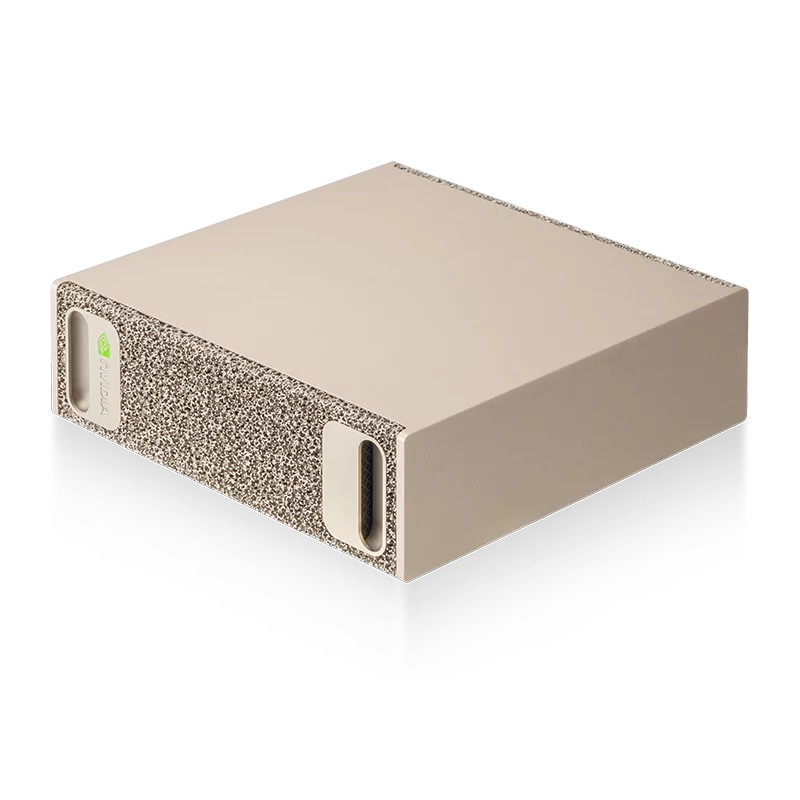

NVIDIA Groq 3 LPX: A Low-Latency Inference Accelerator Designed for the NVIDIA Vera Rubin Platform



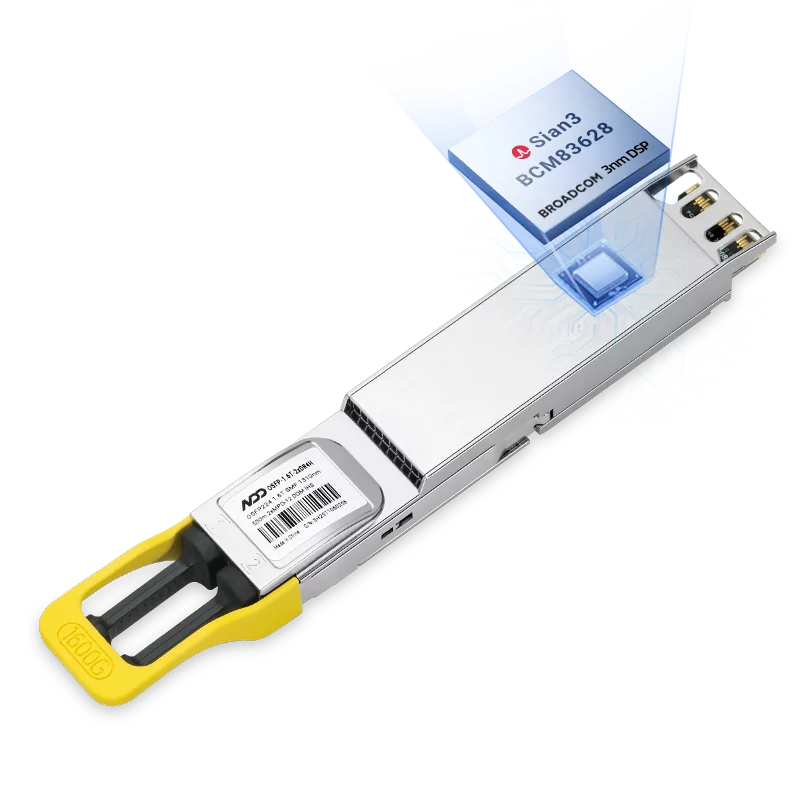

800GBASE-2xSR4 OSFP PAM4 850nm 50m MMF Module

800GBASE-2xSR4 OSFP PAM4 850nm 50m MMF ModuleLearn More

Popular

1. NVIDIA MGX Ecosystem: Building Modular Infrastructure for AI Factories

2. NADDOD × DGX Spark × OpenClaw: A Practical Guide to Local AI Agent Cluster Deployment

3. NVIDIA BlueField-4 STX Storage Architecture: Designed for an AI-Native Storage and Data Platform

4. InfiniBand vs RoCE for AI Inference Workloads: Performance, Cost, and Scalability

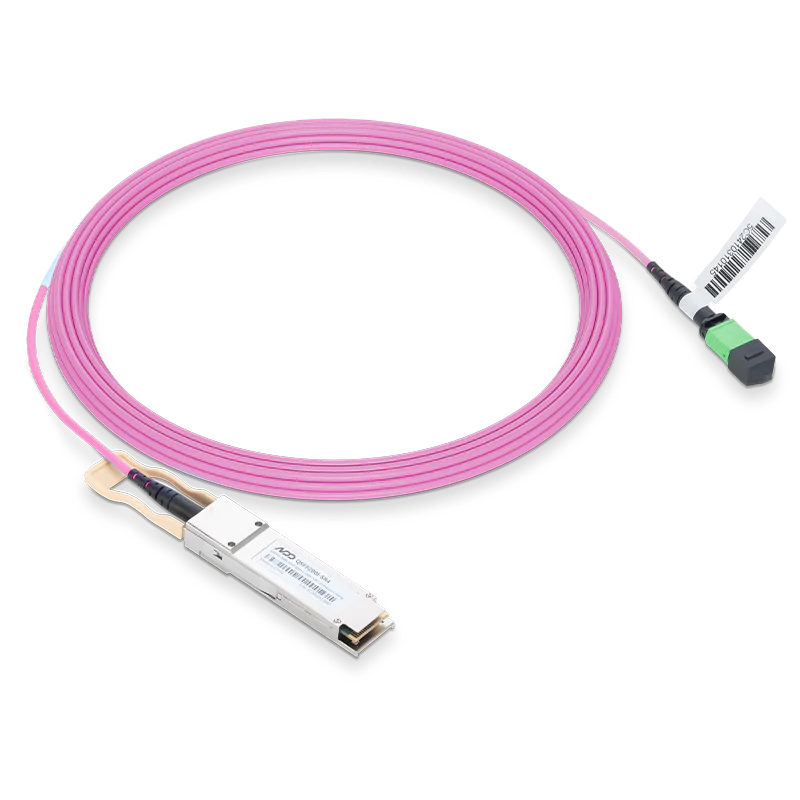

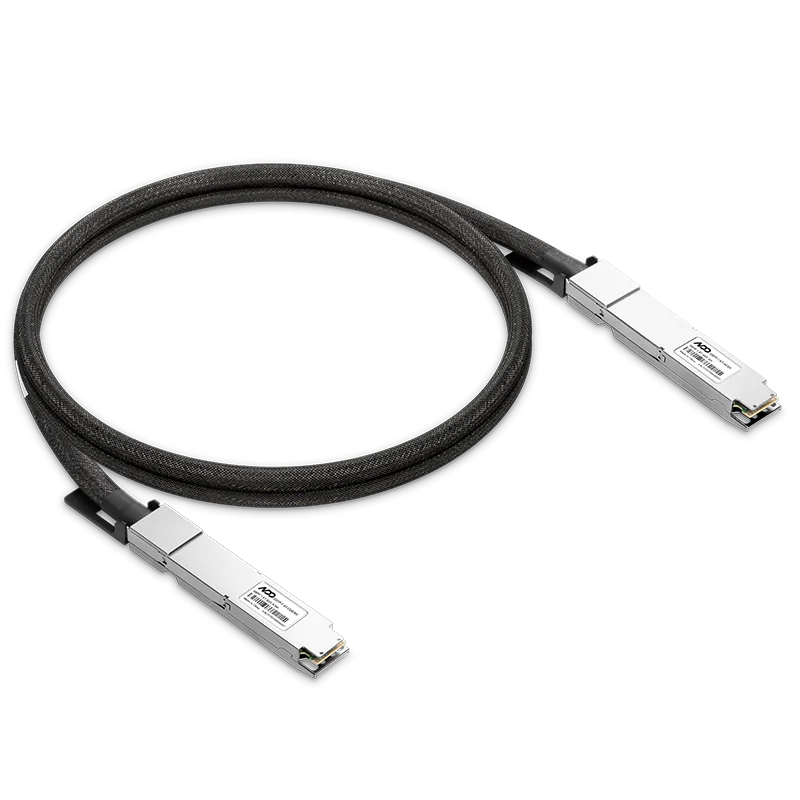

5. DAC vs AOC vs Optical Transceiver: Which Interconnect Should You Choose for AI Inference Workloads