Find the best fit for your network needs


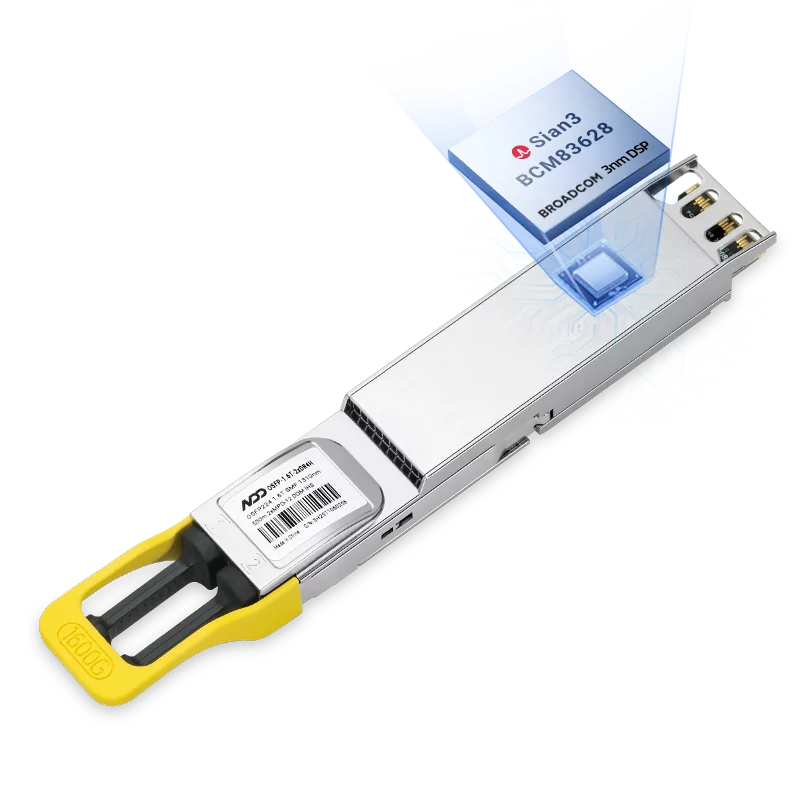

800GBASE-2xSR4 OSFP PAM4 850nm 50m MMF Module


Popular

1. Analysis of Prefix Caching in Large Language Model Inference

2. Top 5 Challenges in Large-Scale AI Inference Workloads

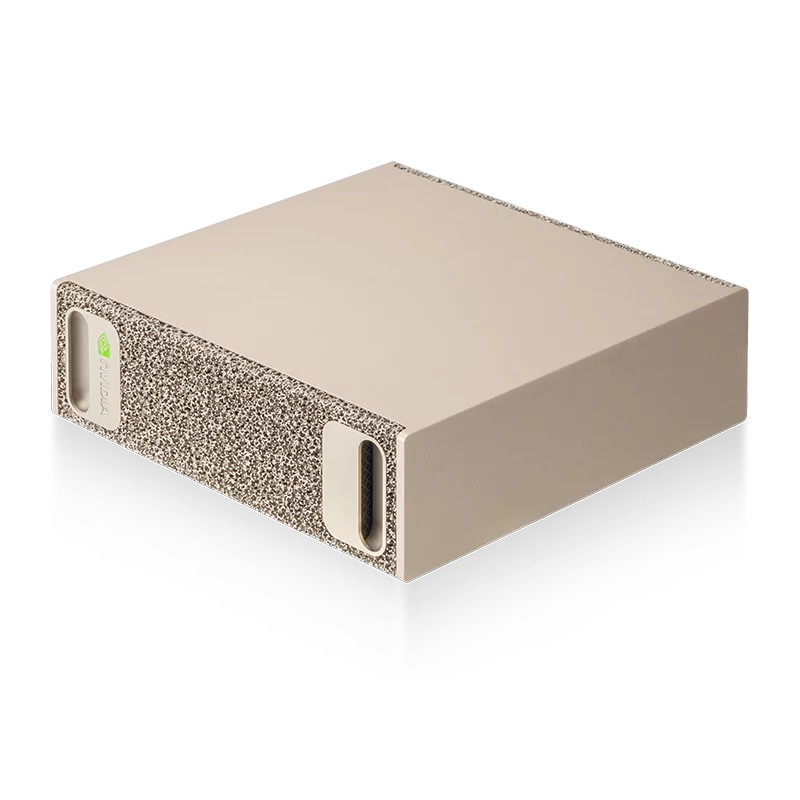

3. NVIDIA DGX Rubin NVL8 Technical Analysis: AI Training and Inference Accelerator

4. Optimizing AI Inference Workloads: Reducing Latency, Boosting Throughput, and Cutting Costs

5. In-Depth Analysis of OCS: Optical-Layer Direct-Connect Switching Technology